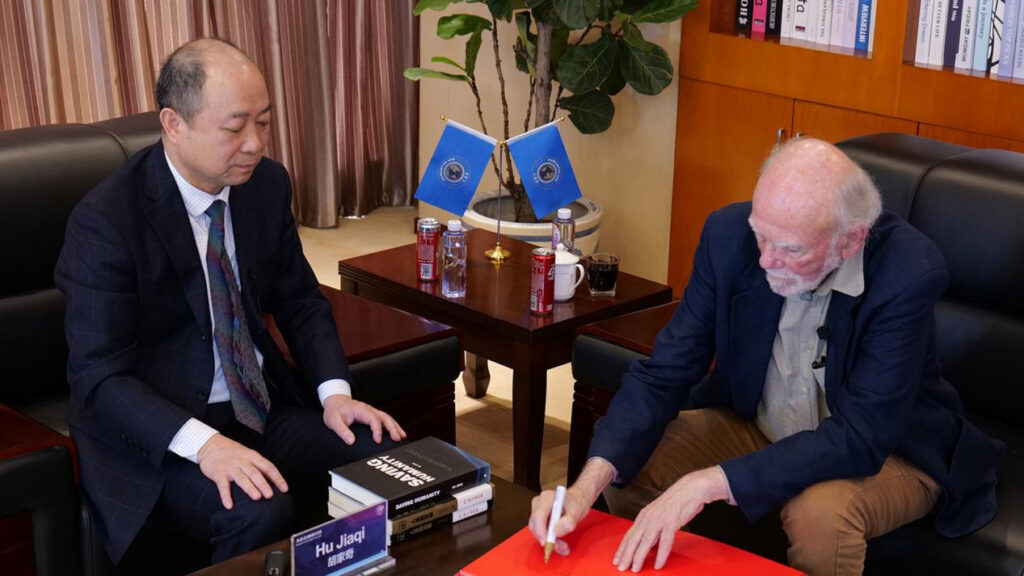

Recently, Hu Jiaqi, founder of Humanitas Ark and renowned anthropologist, held a significant academic exchange with Barry Barish, Professor at the California Institute of Technology, 2017 Nobel Laureate in Physics, and the “Father of Gravitational Waves.”

Chairman Hu Jiaqi is an early pioneer in the systematic study of technological crises and a key architect of its theoretical framework, while Professor Barish is an iconic figure in contemporary physics. The two leading scholars from different disciplines engaged in an in-depth discussion lasting over two hours on major issues such as the safety risks of technology and the future of humanity. This profound cross-disciplinary dialogue between the natural sciences and the humanities and social sciences reflects the growing concern within the scientific community over existential risks to humanity, offering new perspectives and momentum for the global discussion on technology governance.

Notably, Professor Barish is the second Nobel laureate Chairman Hu Jiaqi has met within a month, following his visit the previous month with Professor Michael Levitt, Nobel Laureate in Chemistry. Through these academic dialogues, Hu continues to gather global wisdom and raise a powerful call for humanity to jointly address technological risks. Below is the complete transcript of the dialogue between Chairman Hu Jiaqi and Professor Barish. The content has been lightly edited for clarity but preserves the original thoughts and lines of reasoning to the greatest extent possible.

I. Hu Jiaqi Introduces His Academic Research Journey

Hu Jiaqi:

My life’s work has been, first, to complete my academic research, and then to promote that research globally through action.

I started this work in 1979 and continued until early 2007, completing this book. It took me 28 years. But shortly after this book was distributed, during the promotion process, it attracted the attention of the relevant authorities, and my book was banned at that time.

However, I later translated it into English and Russian, and it was published in Hong Kong, in Singapore, and elsewhere.

Barish:

What was the reason they banned it?

Hu Jiaqi:

You will know after I explain a bit more.

Twenty years ago, I came to this conclusion: If science and technology continue to develop as they are today, human extinction will occur in two to three hundred years at the longest, and within this century at the shortest. This is not a possibility; it is a certainty.

At that time, China’s slogan was: “Science and technology are the primary productive forces.” My conclusion was not something that could be said.

I also proposed a series of solutions. Some of these solutions were also not suitable to be discussed in that environment.

But the Chinese government showed me great tolerance. The subsequent work I did was not interfered with. For example, I set up two global websites to promote my views, and the Chinese government did not interfere. Moreover, I served four consecutive terms as a member of the Mentougou District Committee of the Chinese People’s Political Consultative Conference (CPPCC), and for a full 20 years, they did not interfere with me.

Barish:

Is it still banned?

Hu Jiaqi:

It is still not publicly distributed today, but many copies have already been sold. You can buy my book on the second-hand market, because initially it was allowed to be distributed. It was only later that they stopped me.

Barish:

What was the reason given to ban it?

Hu Jiaqi:

At that time, in this place, 20 years ago, saying that science and technology would soon lead to human extinction was a taboo subject.

But today, many people around the world have already said that science and technology could possibly lead to human extinction, including Stephen Hawking, Elon Musk, Geoffrey Hinton, and many others.

So today, although this can be said, it is still very difficult to publish this type of book in China.

Let me explain this by starting from later events. I began researching this issue in 1979. In 2009, two years after my book was published, Stephen Hawking warned against rashly contacting aliens, saying they could bring fatal disaster to humanity. I had written about this in my book.

More importantly, in 2013, the Future of Humanity Institute at Oxford University, under the direction of Nick Bostrom, completed a formal report stating that humanity could go extinct in the next century, and that the culprit would be science and technology.

When I saw this report, I was very excited. I wrote a letter to Nick Bostrom, sent him a copy of my book, and published an article titled “Finally I’ve Got a Bosom Friend.” This event was also reported by the Chinese media.

Six years earlier, I had approached many media outlets to discuss how science and technology would soon lead to human extinction, but the result was that my book was banned.

When the Oxford University research report came out, it immediately attracted widespread attention from the Chinese media, and the government did not interfere at all.

The following year, in 2014, seven years after my book was published, Hawking talked about the possibility of artificial intelligence developing self-awareness, and said that it could replace humanity and drive it to extinction.

Then, in 2017, Hawking again addressed this issue, stating that the uncontrolled development of science and technology could bring catastrophic disaster to humanity. He believed that to solve this problem, it might be necessary to establish a world government to control science and technology. This view is highly consistent with mine, though it came ten years after my book was published.

Today, many people are concerned that science and technology will lead to human extinction, but 20 years ago, that was not the case, let alone 40 years ago. Saying that science and technology would soon lead to human extinction back then was seen as unnecessary worry, or completely groundless.

II. Exploration of Hu Jiaqi’s Academic Research Achievements

Hu Jiaqi:

Let’s go back to the very beginning. I will introduce the results of my many years of research. After I finish, you can ask questions, and I will answer them.

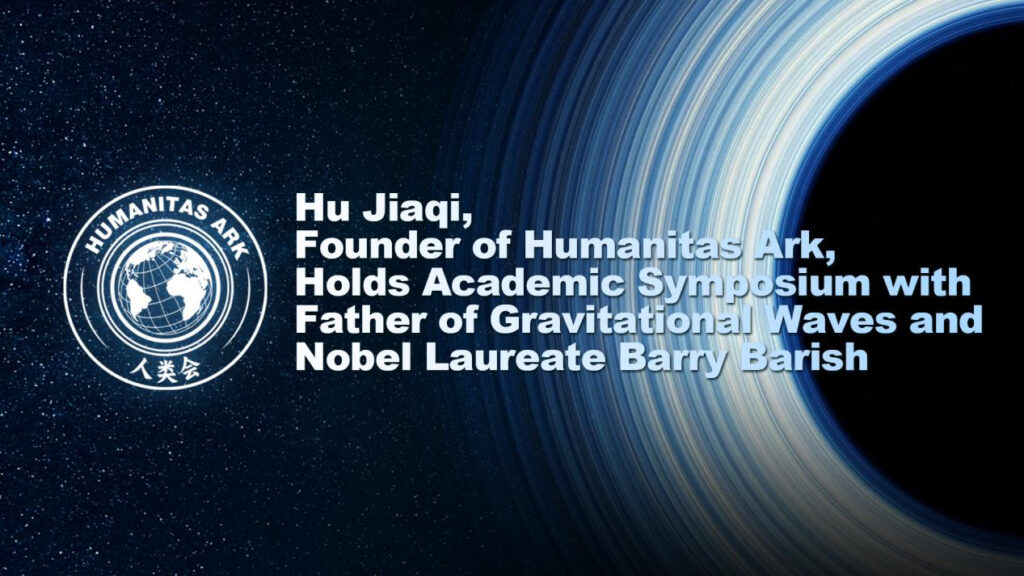

The results of my many years of research—whether in this book, in the many articles I have written, or in the things I have done—can mostly be explained with this diagram.

I approach all my research from these two issues: one is the holistic survival of humanity, and the other is the universal well-being of humanity. I do so because I believe there are no greater issues than these two.

The holistic survival of humanity is the main issue I want to discuss today.

My research conclusion, as I said earlier, is this: if science and technology continue to develop as they are now, human extinction will occur in two to three hundred years at the longest, and within this century at the shortest. I will explain my reasoning process shortly.

All my research is based on human nature. I believe that we humans are a specific species. We are not gods or immortals; we are simply the human species. Any design of a social system that violates human nature is doomed to fail. Human nature can be guided, but it cannot be fundamentally changed.

Based on human nature, I have established these three principles: the Principle of Maximum Value, the Principle of Justice, and the Principle of Farsightedness. These three principles are the methodology for all my research.

Because I am concerned that we may not have enough time for discussion today, I cannot introduce all three principles one by one. I will introduce only one principle—the Principle of Maximum Value.

I believe that the most important values for humanity are, first, survival, and second, well-being. And humanity as a whole carries the greatest weight. Therefore, the holistic survival of humanity overrides all. When the interests of individuals, ethnic groups, or nations conflict with the holistic survival of humanity, the latter must be unconditionally obeyed.

Thus, an important slogan from my many years of research is: The holistic survival of humanity overrides all. One of the important slogans of our Humanitas Ark is also: The holistic survival of humanity overrides all. It is written on the flyleaf of this book: “The holistic survival of humanity overrides all.”

If one does not recognize the correctness of these three principles, then all my subsequent research may be wrong.

Based on these three principles, I attempt to solve three problems. The first problem is the holistic survival of humanity; the second problem is the universal well-being of humanity; the third problem consists of other issues derived from the holistic survival and universal well-being of humanity.

Regarding the holistic survival of humanity, as I said, in my reasoning, if science and technology continue to develop as they are, human extinction will occur in two to three hundred years at the longest, and within this century at the shortest. How do we solve this problem? My pathway is as follows.

First, strive in the present—that is, we should do as much as we can right now to prevent technological disasters, making the greatest possible effort. For example, Yudkowsky proposed the alignment theory, which is a relatively popular theory now. I believe this theory has some effect, but not a great one. Even though it is limited, we should still do it.

Second, we need to launch an awakening and enlightenment movement to make the world re-understand science and technology.

Third, what I believe to be the fundamental solution to this problem is the Great Unification of humanity, using the power of a world regime to control science and technology. So the third step is that humanity must achieve unification.

After unification, science and technology must be strictly restricted; scientific theories in particular must be strictly restricted, and some even forgotten. However, we should disseminate safe and mature technologies to the entire world. I believe that disseminating safe and mature technologies globally would be sufficient to ensure abundant food and clothing for humanity. Humanity must not be too greedy.

We also need to restructure our society. The current model of human society cannot solve this problem. I will discuss this issue of restructuring human society further later.

Through this series of actions, we can achieve the perpetual survival of humanity. This is the pathway I have designed to solve the holistic survival problem.

As for solving the universal well-being of humanity, I have designed a social framework for the future. The future society should be a peaceful, friendly, equitably prosperous, and non-competitive society. I believe such a society is most conducive to people achieving universal well-being. Not only is it conducive to universal well-being, but it is also conducive to the stability of a unified society.

This is the overall framework of my research that I want to introduce today. Later, I will focus on explaining how I demonstrate that the continued development of science and technology will certainly and soon lead to human extinction.

Before that, Professor, do you have any questions?

Barish:

I understand what you said. I understand this theoretical framework and what you have just expressed.

Hu Jiaqi:

Good, then I will continue.

Barish:

Should I offer my views now?

Hu Jiaqi:

You can share them all together after I finish speaking.

Barish:

Sure.

III. The Argumentation Method for Technology-Induced Human Extinction

Hu Jiaqi:

Next, I would like to explain my argumentation method for how science and technology could lead to human extinction.

I use a method combining Extinction Path Analysis with Defense Limit Testing. The claim that science and technology can cause human extinction cannot be falsified or observed directly, and no experiment on it can be replicated. Therefore, it is very difficult to demonstrate by conventional methods whether science and technology could lead to human extinction. However, extinction risks can be partially verified and assessed.

Currently, the academic community often uses probabilistic methods to argue this issue. I believe using probabilistic methods is very unscientific. We can discuss this point later.

Why is it unscientific? Because probabilistic methods rely on a sample that is far too small.

There is only one human species on Earth, and Earth is currently the only planet we know of with higher life forms. Current research mostly uses Bayesian estimation models, in which the parameter values are highly uncertain and vary over a very wide range. Different parameter values often lead to vastly different conclusions. On the surface, using mathematical methods and models to produce mathematical conclusions seems precise, but in reality, it yields unscientific data.

Let me give an example. Toby Ord believes that within 100 years, the probability of technology causing human extinction is 1 in 6, or about 16.7%. Is this high or low?

Elon Musk believes the probability of AI causing human extinction is around 20%.

Max Tegmark believes the probability of AI going out of control is greater than 90%.

Authoritative survey institutions have surveyed over 2,700 researchers specializing in artificial intelligence. The median result of this survey is that within this century, the probability of AI causing human extinction is between 5% and 10%.

Hinton is regarded as a godfather figure in artificial intelligence, and he has also assessed this issue. However, his assessment, as expressed in media interviews, has varied considerably. For instance, in his most urgent statement during an interview, he said that AI could become uncontrollable within 5 to 10 years. At other times, he has stated that the probability of AI becoming uncontrollable within 30 years is 10% to 20%.

So even a godfather figure like him gives varying answers when asked about probabilities. You might ask: isn’t Hinton, as a great scientist and a godfather in AI, contradicting himself by giving one number today and another tomorrow?

I don’t think so. On the surface, the numbers are contradictory, but his expression of the severity of the problem is not contradictory. Whether it is 30 years, or 5 years, or 10 years, or 40 years, all of them reflect his judgment: this matter is extremely serious, and it must be addressed.

Therefore, when deciding how to study this issue, I believe it is inappropriate to rely simply on probabilistic methods. Instead, we should use qualitative methods. So how did I reason this out? Below, I will explain my reasoning process.

I adopted a method combining Extinction Path Analysis with Defense Limit Testing. My reasoning consists of five steps.

Step One: The development of science and technology inevitably leads to the increasing destructive power of tools.

Humans, since ancient times—for example, in the Paleolithic Age—used stones and sticks to hunt wild animals. While using stones and sticks to hunt, they also used them to fight each other, but the efficiency of such fighting was very low.

Then came the age of cold weapons—one sword or one knife could kill one person. After the invention of gunpowder, an explosive shell could kill many. Then came the mass-energy equation, and then nuclear bombs—one nuclear bomb can destroy an entire city.

Therefore, the development of science and technology inevitably leads to the increasing destructive power of tools. This is common sense and requires no further proof. Thus, this is step one of my reasoning.

Step Two: Since the Industrial Revolution, technological development has rapidly approached the capability to cause extinction.

I believe the acceleration of science and technology began with the Industrial Revolution—that is, the mid-18th century. During the agricultural era, technological development was very slow. After entering the industrial age, science and technology have grown exponentially. In just over 200 years, many technologies have been developed to the point of nearing the capability to exterminate humanity—for example, genetic engineering, nanotechnology, and artificial intelligence.

The safety concern about such technologies is no longer just how many people they might kill or harm—the concern itself is whether they could lead to human extinction.

This is step two of my reasoning: after the Industrial Revolution, science and technology have grown exponentially, rapidly approaching the capability to cause human extinction. Even if they cannot yet exterminate humanity, it seems that the day is not far off.

Step Three: As long as science and technology continue to develop out of control, the destructive power of tools will certainly become stronger, until means of extinction eventually emerge.

Even if the technologies we worry about today—genetic engineering, nanotechnology, or artificial intelligence—cannot yet exterminate humanity, as long as science and technology keep advancing, something even more powerful will inevitably appear, and sooner or later, it will possess the capability to cause human extinction.

Once the first means of extinction emerges, if humanity has not been exterminated and science and technology continue to develop, more means of extinction will keep emerging.

Higher-level means of extinction will inevitably follow a trend: they will become harder to control. Initially controlled by nations, they will increasingly become accessible to a single enterprise or even to individuals. The actions of a state are relatively easy to regulate, but the actions of individuals are very difficult to control, because there will always be those willing to commit extreme evil. This is the third step of my argument.

Step Four: Means of extinction are non-offsettable—they cannot be defended against.

Some means can be defended against. For example, with a released virus, drugs can be studied to treat it. But some means cannot be defended against—for example, nuclear weapons. When a nuclear bomb explodes, its energy is released and destruction is done; there is no defense against it.

However, even for means that could theoretically be defended against, such as released viruses or bacteria, developing drugs or treatments takes time. That is, there is a window period. Because humanity’s future is very long, and because new means of extinction will continuously emerge as science and technology reach higher levels, the total length of these window periods will tend toward infinity as humanity continues to exist. That is, over a very long span of time, there will be means of extinction with no way to defend against them. Sooner or later, a disaster will occur.

If left to natural forces, that is, if humanity continues in a natural state, it is possible for us to survive for 100 million years. Dinosaurs survived on Earth for 160 million years. Nautiluses have survived for 530 million years, because they adapted well to Earth’s environment. Humans have a much greater ability to adapt to Earth’s environment than those species did. Therefore, by natural forces alone, humanity would not easily go extinct. Under natural conditions, it should theoretically be possible for humans to survive for hundreds of millions of years.

This is my Defense Limit Testing: even if we assume that every means of extinction has a corresponding countermeasure, developing that countermeasure takes time. Because humanity’s future is very long, as the human race continues, this window period becomes extremely long—and eventually, extinction will occur. This is step four of my reasoning.

Step Five: The outbreak of extinction forces is inevitable.

Extinction forces could emerge in three ways: First, someone deliberately uses means of extinction to wipe out humanity. There will always be people willing to do extreme evil, and there are plenty of people—including scientists—who would want to exterminate humanity. Second, extinction forces could be unleashed accidentally during scientific experiments. The reason is that scientific experiments explore unknown domains. Since they are unknown, there are many things we do not yet understand. The more advanced science and technology become, the more unknown content there is, and the more easily an accident could happen. Third, improper use of technological products could also unleash extinction forces. When science and technology reach a sufficiently high level, it becomes very difficult for humans to accurately grasp the true nature of technological products. This is similar to how the use of Freon led to the destruction of the ozone layer.

This is my complete reasoning process, divided into five steps, using “Extinction Path Analysis + Defense Limit Testing.” I used qualitative reasoning, but the conclusion I reached is quantitative. That conclusion is a certainty, 100%, not a probability.

If you use quantitative, probabilistic methods, the parameters cannot be accurately determined. I prefer not to adopt a method that appears precise but is not. Since my research covers a broad range, today I will focus only on this issue, because I believe this is the issue for which I most need the professor’s support.

IV. Hu Jiaqi’s Journey of Academic Dissemination

Hu Jiaqi:

In mainstream risk research today, most approaches are probabilistic. I consider myself a maverick, but I firmly believe my method is scientific.

As far as I know, in terms of systematic research on this issue leading to systematic conclusions, I was relatively early—in 2007. After that, the first formal research report came from Nick Bostrom’s Future of Humanity Institute at Oxford University in 2013.

After reaching my conclusions, I first tried to disseminate them through books. Given the difficulties in China, after 2008, I set up a dedicated website for promotion. Over the years, I have written a total of 12 open letters to leaders of mankind and sent out 1 million letters—including some to you, Professor.

I started with paper letters, then sent books. Later, because the volume became too large and we had collected too many addresses, we switched to email.

I started this work in January 2007. When you won the Nobel Prize, Professor, and we learned about it, I sent you an email.

In total, I have sent 800,000 emails, 200,000 paper letters, and about 10,000 books.

Then, because my dissemination efforts were not very effective, I later tried another approach. I have some business acumen, and I thought: if I became the world’s richest person, my voice would carry weight.

So over 9 years, I invested almost all of my funds to become the richest person and gain influence. But that effort failed, and in the process, I burned through almost all my past wealth.

When that effort was about to fail, I realized that this approach might be beyond my capability. So in 2018, I established Humanitas Ark in the United States. Since previous methods had failed, I decided to use an organization to advance this cause.

The organization’s supporters have recently surpassed 14 million, spread across almost all countries and regions in the world—according to our data, we have supporters in 255 countries and regions.

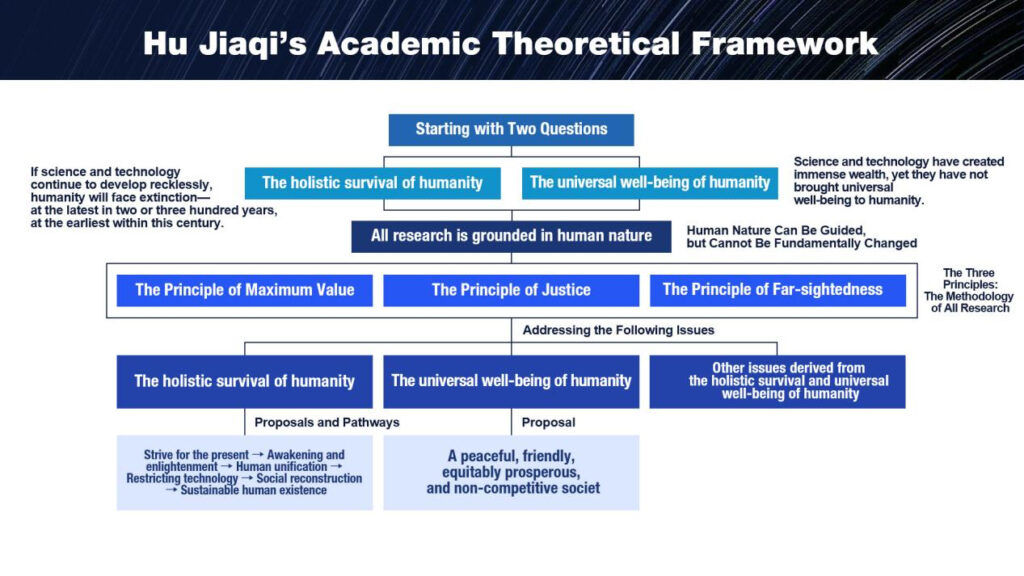

Recently, we made a decision: to directly engage with the world’s top scientists, hoping to gain their recognition and support. The first scientist we approached was Michael Levitt, Nobel Laureate in Chemistry. You, Professor, are the second. We will continue this work for a long time.

That’s all I have to say for now. Professor, please let me know if you have any questions.

V. Government-led Discussion on Human Unification

Barish:

First, let me say how impressive your study is, and I’m also impressed by how long you have worked on this problem. I think you understand what the real problem is, but I think there are some more immediate problems.

Eighty years ago, the atomic bomb was dropped on Hiroshima. Soon after, a much more powerful bomb, the hydrogen bomb, was developed but has not been used.

Now, eighty years later, the development of nuclear weapons has continued, but there has been little testing of them. Presently, the major countries of the world together have the capacity to destroy the world.

I agree with much of what you say, but the immediate problem, I believe, is governments. The agreements between governments are very fragile or don’t exist. The threat of destroying all of us, as you pointed out, is tremendous. It could happen anytime.

I think you’ve pointed out that there are many ways we can destroy ourselves. And if we don’t do something to change our approach to living in this world, it’s almost inevitable.

I personally don’t think that the problem can be solved on an individual basis. As long as we have competing governments, we’re in danger. So I agree with what you said.

But do we trust all the circumstances in the world and our governments? I don’t, including my own government.

So how do we ensure that our governments don’t destroy us? I don’t know the answer. But it seems to me, in addition to everything you said, we have an immediate problem: we’ve created governments competing with each other. When we had no weapons, maybe it was okay. Now it’s a great danger.

Eighty years ago, we could destroy a city or two in Japan. My government destroyed all the people in those cities with nuclear weapons. Now, eighty years later, we can destroy the world. It’s sad but true that governments don’t appreciate this. I don’t have great trust in my government, your government, or other governments to keep us from fighting with each other and using nuclear weapons.

If we can learn to solve the threat of nuclear weapons, then we can attack the problems that you talk about—for example, the development of viruses.

We never had a worldwide unified way to try to beat the COVID virus. We were lucky that it got milder with time. Viruses can easily evolve to get more serious with time instead of less serious. So we shouldn’t be so proud that we solved the COVID problem. It became less serious. It matters less whether it started in a laboratory or nature than the fact that we were not effective in fighting it. We were lucky that it wasn’t more serious.

All of this, and what you talk about, for me, says that our present structure of governments is a big problem. You talked about a worldwide government, or basically a worldwide effort to solve these problems. But how do we get there? How do we change from a world made of governments that have self-interest? How do we change from a world of competing governments to something else?

So if we can solve the government problem and truly have one world, then I think we can effectively deal with these problems. Because presently, it has to be done country by country, which is not possible.

So we can pick any one of your problems, which I agree with, and ask: how do we solve it? If we have fifty powerful countries in the world instead of one country, how do we solve it?

So the most powerful conclusion of what you’ve done—which is very impressive—is that we have to change to a world government. There’s no other hope, I think, practically.

I’m interested in whether you disagree with me.

Hu Jiaqi:

Thank you very much for raising your questions. I would also like to share my thoughts on this issue.

First, I believe that nuclear weapons cannot wipe out humanity—this is a definite conclusion.

I have a definition of human extinction: extinction means not a single person remains. In other words, humanity has no future. That is my definition.

Nuclear weapons might be able to blow humanity back to the Stone Age, but they cannot exterminate humanity—that is a definite conclusion. They might kill billions of people, but a few will survive. That small number can continue our species. Just like after the atomic bombings of Hiroshima and Nagasaki, most people were killed, but some still survived. That is my first point.

My second point is that I believe the most critical issue right now is also the issue of governments. Regarding the government issue, it is exactly what I talked about earlier: we must promote an awakening movement so that all of humanity can reach a consensus.

There are many examples of countries merging. The unification of East and West Germany was not achieved through war. Also, after the United States gained independence, it was a confederation of states. The merger of the thirteen states was voluntary because they shared common interests.

In European history, there was the Renaissance. Before the Renaissance, it was impossible for Europe to break free from the rule of the Catholic Church. But because of the Renaissance, Martin Luther’s Reformation took place. After that came the Enlightenment.

The Enlightenment gave people a new understanding of human rights and state power. Later came the theory of separation of powers and checks and balances. Without the Enlightenment, there would be no United States of America—the world’s first democratic country with separation of powers. Before that, people believed that a country could not exist without a king—that the country belonged to the king.

Looking at human history, the expansion of political entities is a major trend. For example, China’s earliest Xia Dynasty was just a small area covering today’s Shanxi Province and the northwestern part of Henan Province. Later came the Shang Dynasty, which was larger, then the Zhou Dynasty, even larger. Then the First Emperor of Qin unified China, making it even larger—this is a major trend.

World history follows the same pattern. Take Western history as an example. Today’s Western civilization originated from ancient Greek civilization. During the classical Greek period, Greece consisted of small city-states. The largest, like Athens and Sparta, had only three to four hundred thousand people. Today we have countries with vast territories, like Russia and the United States. In the East, we have China and India, each with 1.4 billion people and nearly ten million square kilometers. The overall trend is toward expansion.

For example, the expansion of the British Empire—it seemed the whole world was within the British Empire. The expansion of the Mongols stretched across Europe and Asia, forming a vast territory. Although there are fluctuations in territorial and population expansion, like the breakup of the Soviet Union, the overall trend is toward growth.

In my research, I believe that the only hard condition preventing the expansion of human political entities is inconvenient transportation and communication, which make it difficult to govern a large region. Apart from that, there is no second hard condition.

Today, transportation and communication have connected the world into a global village. So the hard condition of transportation and communication has been solved. What is missing is only a consensus.

In the past, expansion or the enlargement of political entities had two drivers: one was conquest through war, and the other was peaceful unification based on shared interests.

The expansion of the Mongols and the British Empire was driven by conquest, while the unification of East and West Germany and the merger of the thirteen states of the United States were voluntary, based on common interests.

Now we face an issue of absolute common interest: if humanity does not unify—does not achieve great unification and establish a world government to restrict the development of science and technology—then the human species will cease to exist. There is no greater interest than this. If we explain this truth to people, and if they can accept it, the possibility of peaceful unification of humanity is quite high.

There are precedents in modern human history. After World War I, people saw how devastating the war was—a war that affected the entire world—so the League of Nations was established. The League of Nations required nations to cede some of their rights.

After World War II, people saw that WWII was even more devastating than WWI, so they felt this problem must be solved. Then came the United Nations. The UN also requires nations to cede some of their rights. Although they are not world governments, they require all member states to give up some rights.

So from this we can see that achieving human unification—peaceful unification—is also possible, because the common interest is too great.

Finally, I would like to answer your last question: which countries need to work together to achieve the great unification of humanity?

I can design multiple plans. Let me give one example: if both China and the United States determine that this must be done, and if they are absolutely certain about it, then I believe the possibility of promoting world unification is 60 to 70 percent. If Russia joins, it becomes 70 to 80 percent. If India is also added, then the possibility reaches 80 to 90 percent. If just these four countries form a joint force and reach a consensus, the possibility of human unification would be 80 to 90 percent. That is my view.

Someone once joked with me, saying that human unification is a fantasy—unless aliens invade, then humanity will unite to fight together; otherwise, we will be destroyed by aliens. I said you are absolutely right. If aliens invade, and if humanity does not unite to fight together, we will be destroyed by aliens. This reasoning is very straightforward—and that would promote human unification.

Now, science and technology may bring a cataclysmic disaster to humanity, but this reasoning requires deep thought. That is why we need to promote an awakening movement. The work of Humanitas Ark is to promote an awakening movement. Therefore, as long as people reach a consensus and understand this complex reasoning, I believe human unification is possible.

Finally, let me add two points. First, take nuclear weapons as an example. In my view, they cannot cause human extinction, and they are held in the hands of states. Weapons of mass destruction that are in the hands of states are relatively easier to control. By contrast, take the loss of control over artificial intelligence: a highly skilled scientist could trigger this on a computer. Or take biological weapons: a highly skilled biologist could achieve this in their own laboratory.

The actions of a country are relatively easier to regulate. The actions of individual human beings are extremely difficult to control. That is my first additional point.

Therefore, the high-tech means that humanity faces today are far more terrifying than nuclear weapons.

My second point is this: a person who is drowning and about to die will grab onto whatever they can. Even if it is just a single straw, they will still grasp it.

So when humanity faces the risk of extinction, even if we cannot completely solve the problem, we must still fight with all our might. This is both common sense and instinct.

VI. Nuclear Risk and the Future of Humanity

Barish:

First, let me say briefly that I disagree about nuclear weapons.

I think looking back at Hiroshima and saying that it was limited is one thing; today is different. It’s eighty years later. We now have hydrogen bombs, not just atomic bombs.

The worldwide danger is that nuclear war would do exactly what, according to the best theory, killed the dinosaurs, which you mentioned. The dinosaurs were killed, we believe, by a major event that polluted the whole atmosphere of the earth. That is the model by which we expect nuclear weapons could do the same. I think you underestimate that possibility.

That’s okay. We can disagree on that. So let me put that aside and say that I am also less optimistic that governments can work together or merge together without fighting. I’m less optimistic about that. That’s okay.

Let’s put that aside and come to your main points—not governments and not nuclear war, but your main points. I agree.

I think you deserve a great deal of credit for devoting much of your energy and time to the threat of humans destroying themselves. I agree with that threat, one that is independent of governments and comes not from governments but from individuals, which is what you were talking about. Some of the details we may not agree on, but you have several illustrations of how we can destroy ourselves. I agree with that. For example, viruses. In addition to viruses, we can use AI or many other technological advances that are capable of destruction.

I may not agree exactly on which one is the most dangerous. That doesn’t matter. I think what matters is that you identified that there are factors that are in our hands, that we have abilities as individuals that can destroy mankind. And I agree. Maybe not on the details of which ones, but I agree.

I think the solutions you proposed, like restricting technology and awakening, important as they are, are not necessarily the only solutions. If we have a child in our family, then getting them to behave properly is not just a matter of restricting what they do, but of educating them to do what they should as a child. I believe it’s the same for us adults, humans. That is, it’s more important to educate, to enlighten, to make people understand what the dangers are than it is to restrict.

So I think you are playing a very important role in educating people and making them understand the dangers, and so forth. That is absolutely crucial. And there are not enough people who do that. So your work is very, very important. Your final solutions, we may not exactly agree with. I think it’s more important to educate than to restrict. It is human nature to not accept something being forbidden. Whether we are an adult or a child, we don’t like someone to forbid us to do something. So we should do that only when we absolutely need to. But the education part is much more convincing, and awakening and enlightening are much more important, because human nature will accept them if people learn about them.

So I agree with all of these. The emphasis is what I slightly disagree with. But what you are doing to awaken people and enlighten them is incredibly important. More people should do that. And that’s the ultimate solution.

I am less confident that putting restrictions in place is going to work. It’s not going to work.

You have an expression: “Human nature can be guided, but cannot be fundamentally changed.” That statement is what I am emphasizing. We need to understand what human nature is and educate people so that they do what’s right. Because we cannot fundamentally change human nature.

And what I think is your ultimate goal, which you write in English as “a peaceful, friendly, equitably prosperous, and non-competitive society,” is a fantastically proper goal for all of us. The only question is: how to get there?

I think you’re spending a huge amount of your life and energy, and I admire that. And I think we all agree on the final goal: a peaceful, friendly, equitably prosperous, and non-competitive society.

And I congratulate you for committing so much of your life, your energy, your thoughts, to this issue.

Hu Jiaqi:

Thank you very much, Professor, for your affirmation of my work. Regarding the several points you just raised, I would also like to share my thoughts.

First, on the question of whether nuclear weapons could cause human extinction, people may have different views. But one thing I can say for certain: about 66 million years ago, it is believed that an asteroid about 10 kilometers in diameter struck the Yucatán Peninsula in Mexico. That asteroid led to the extinction of the dinosaurs, but such an impact could not exterminate humanity, because humans are no longer naked creatures facing changes in climate and nature. Our access to food no longer depends simply on nature’s gifts; we obtain it creatively. Therefore, humans are far more resilient than dinosaurs.

Even if all nuclear weapons were to explode, some corners of the earth would remain untouched. As long as a small number of people survive, humanity has the possibility to come back. If not a single human remains, then our species disappears—that is extinction. There would be no future.

Barish:

I like your optimism.

Hu Jiaqi:

In my view, using a means of extinction would mean that all humans are gone, including the users themselves. So the scenario in which all nuclear weapons on earth explode simultaneously is itself unlikely. That is my first point.

My second point is this: the future of humanity requires collective effort. Many things seem extremely difficult today. But no matter how difficult, we still have to try. Even if there is no hope, we must still make the effort. That is what I wanted to say.

Barish:

I agree.

Hu Jiaqi:

Is there anything else you would like to say?

Barish:

I think it’s a wonderful commitment, and I appreciate your time and your thoughtful presentation.

Hu Jiaqi:

There is a saying in China: “Gentlemen seek harmony but maintain diversity.” The same principle applies here. You may think something is correct, someone else may think it is not—that is fine.

After talking with you, Professor, for so long today, the greatest consensus we have reached is that science and technology must not recklessly forge ahead without caution. Rushing forward carelessly could pose a threat to the holistic survival of humanity. With that consensus, I believe we have the foundation to become friends.

I know your time is very tight. Right now, according to the schedule, we have about two minutes left. We can set aside the formal discussion.

I hope we can communicate more in the future. I would also like to leave our contact information. If any issues arise in the future, we can discuss them by email.

If you ever come to Beijing, and there is anything I can help with, please don’t hesitate to ask.

Contact Person: Reese Jin

Company Name: Humanity Issues Research Institute

City: Los Angeles

Country: U.S.A

Website: en.savinghuman.org

Email: shao@savinghuman.org